PHASE III – Quantum Visual Music

Phase III extends quantum tomographic data into the visual domain, asking whether the same quantum hardware execution that drives the music can simultaneously generate coherent visual art. Full quantum state tomography in three measurement bases extracts Bloch sphere trajectories over 600 parameter steps per schema, which animate quantum filaments in a stereoscopic VR environment rendered on a high-performance GPU cluster. The organic coherence between the visual and audio material, both rooted in the same quantum hardware execution, validates the core premise of the research.

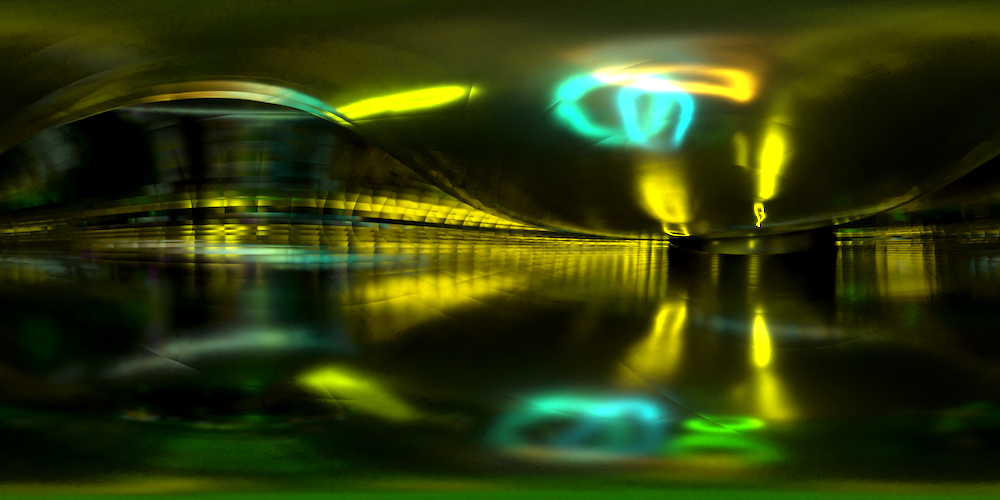

The following preview offers a first look at “Dance of the Qubits”, a unified audio/visual composition currently in development. The first four of eight circuit schemas from Phase III are presented here, each executed on Quantum Inspire’s Tuna-9 quantum processor in Delft, Netherlands. The visual objects and environments are not representations of quantum particles, but their movements, interactions, and spatial positions are exact mathematical transductions of qubit pre-measurement states: authentic records of quantum entanglement, decoherence, and hardware noise during circuit execution. The audio waveforms and their movements through three-dimensional space are transduced from the same quantum data. Creative license shapes the pacing and mixing; quantum physics provides everything else. This is quantum computing as a creative instrument.

Enjoy a preview of Dance of the Qubits!

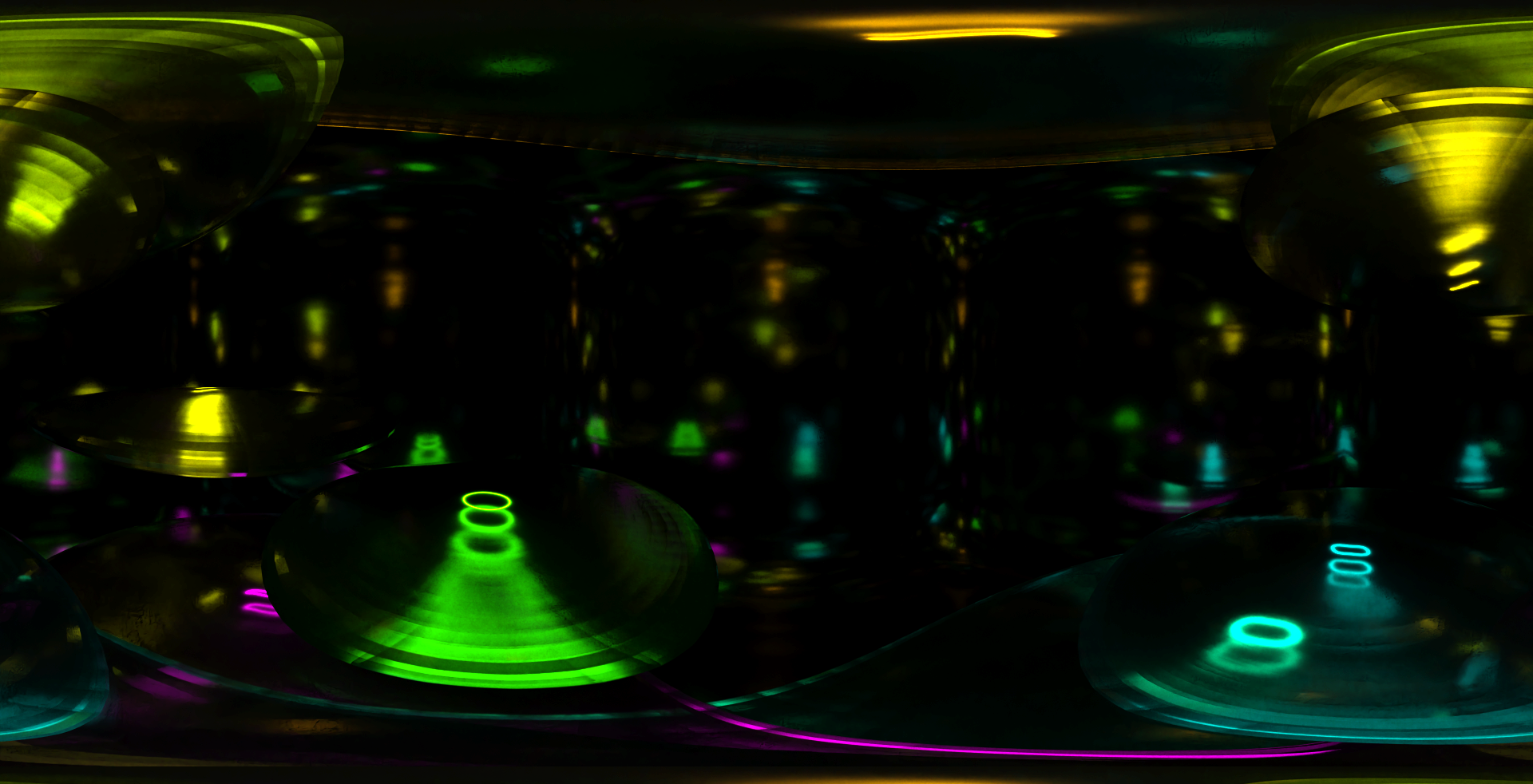

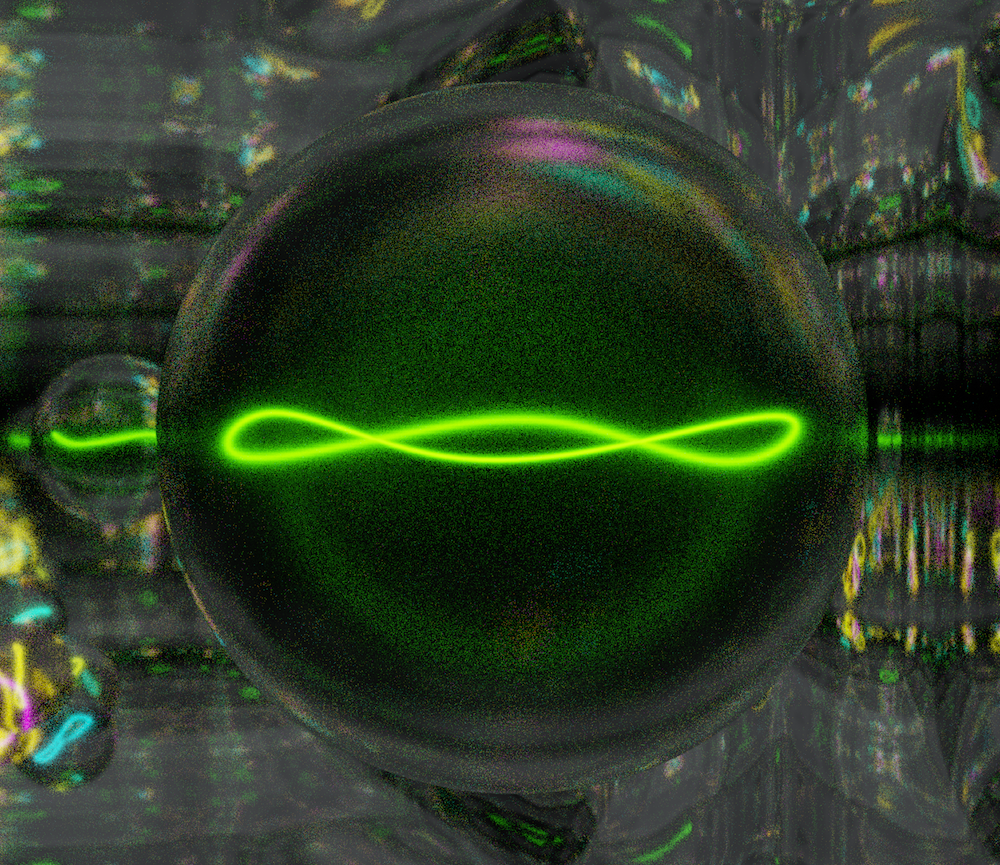

This video is the result of quantum circuit execution on a quantum computer. Eight animated filaments, each encapsulated within its own energetic spheroid, display each qubit’s trajectory within the quantum processor. The confinement seen here is real: these are actual quantum states bouncing around within real quantum hardware. Because the qubits are entangled, their trajectories are not independent paths but eight expressions of a single unified quantum system. The entanglement relationships are visible as the qubits come together and move apart. This is not a metaphor for quantum physics. It is quantum physics, rendered directly into perceptual space.

This is an extremely rough cut of the first video sequence render for the quantum computer music composition “The Infinite and the Infinitesimal”. No editing is involved; the aim is simply to check that the audio and video work together. Even though the music evolves slowly and the visual objects move quickly, there is an indefinable cohesion between the two, most likely because both are derived from the same quantum computer circuit executions.

Michael Rhoades, PhD (PI)

Introduction

Phase III of the Quantum Computational Creativity research program extends the transduction methodology established in Phase II into the visual domain, asking a fundamental question: can the same quantum hardware execution that generates music simultaneously generate coherent visual art? As this phase demonstrates, the answer is affirmative, and the work also reveals an unexpected and profound organic coherence between the two sensory domains, one that could only emerge from a shared quantum physical origin.

Where Phase II transduced quantum circuit evolution directly into audio waveforms, Phase III employs full quantum state tomography to extract the complete three-dimensional trajectory of each qubit’s quantum state as it evolves across the parameter space. These trajectories — expressed as coordinates on the Bloch sphere — are then translated into the spatial and temporal parameters of a stereoscopic VR animation, rendered across a high-performance GPU cluster. The result is a time-based visual artwork whose motion, rhythm, and structure are as authentically quantum-derived as the compositions that accompany it.

Background and Motivation

The Bloch sphere is the natural geometric representation of a single qubit’s quantum state. Any pure qubit state can be represented as a point on the surface of a unit sphere, with the north pole representing |0⟩, the south pole representing |1⟩, and all other points on the surface representing superposition states; mixed states lie inside the sphere. The X, Y, and Z coordinates of this point, derived from measurements in three complementary bases, provide a complete description of the qubit’s state. As circuit parameters evolve, each qubit traces a continuous trajectory through this sphere, encoding the dynamics of the quantum system in geometric form.
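The relationship between a pure state and its Bloch coordinates can be made concrete. The sketch below is a minimal illustration, not part of the project's pipeline: it computes (⟨X⟩, ⟨Y⟩, ⟨Z⟩) for a state α|0⟩ + β|1⟩ and checks the result against the standard polar form cos(θ/2)|0⟩ + e^{iφ} sin(θ/2)|1⟩. The function names are hypothetical.

```python
import cmath
import math


def bloch_from_amplitudes(alpha: complex, beta: complex) -> tuple:
    """Bloch vector (<X>, <Y>, <Z>) of the pure state alpha|0> + beta|1>."""
    alpha, beta = complex(alpha), complex(beta)
    norm = math.sqrt(abs(alpha) ** 2 + abs(beta) ** 2)
    alpha, beta = alpha / norm, beta / norm  # normalize defensively
    cross = alpha.conjugate() * beta
    x = 2 * cross.real                    # <X> = 2 Re(alpha* beta)
    y = 2 * cross.imag                    # <Y> = 2 Im(alpha* beta)
    z = abs(alpha) ** 2 - abs(beta) ** 2  # <Z> = |alpha|^2 - |beta|^2
    return (x, y, z)


def bloch_from_angles(theta: float, phi: float) -> tuple:
    """Same point from the polar form cos(theta/2)|0> + e^{i phi} sin(theta/2)|1>."""
    return (math.sin(theta) * math.cos(phi),
            math.sin(theta) * math.sin(phi),
            math.cos(theta))


if __name__ == "__main__":
    print(bloch_from_amplitudes(1, 0))   # |0>: the north pole
    print(bloch_from_amplitudes(1, 1j))  # |+i>: on the +Y axis
    theta, phi = 1.0, 0.7
    state = (math.cos(theta / 2), cmath.exp(1j * phi) * math.sin(theta / 2))
    print(bloch_from_amplitudes(*state), bloch_from_angles(theta, phi))
```

Both routes land on the same point, and every pure state has a vector of length 1.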

This geometric richness makes quantum state tomography a natural foundation for visual art. The trajectories are not arbitrary — they are shaped by entanglement topology, gate sequences, and hardware-specific noise characteristics. Different circuit schemas produce fundamentally different trajectory geometries, reflecting the unique quantum physical processes occurring during execution. Like the audio transductions of Phase II, these visual trajectories carry the authentic signature of real quantum hardware — impossible to replicate through classical simulation.

Technical Framework

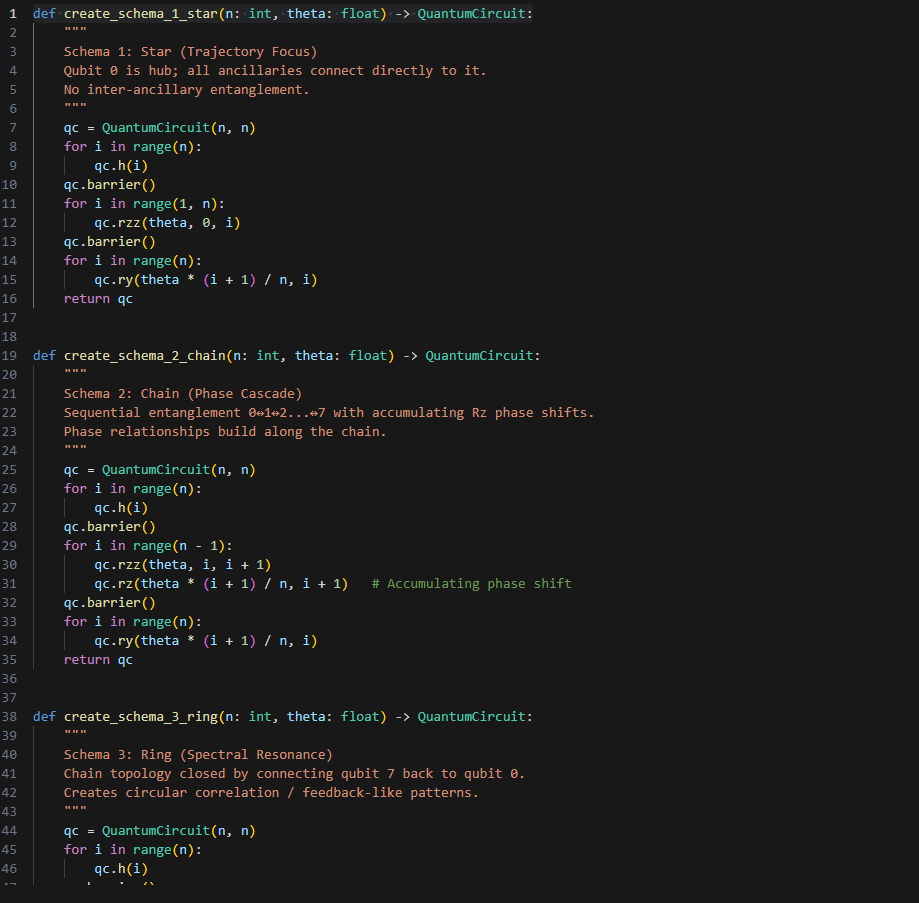

Quantum State Tomography

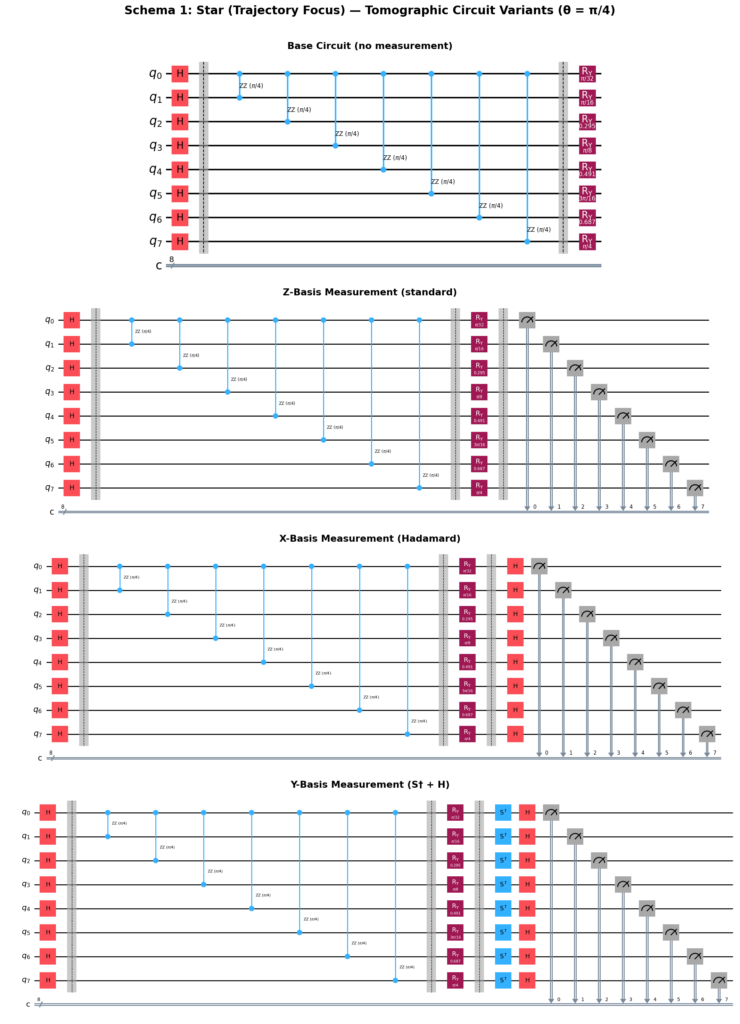

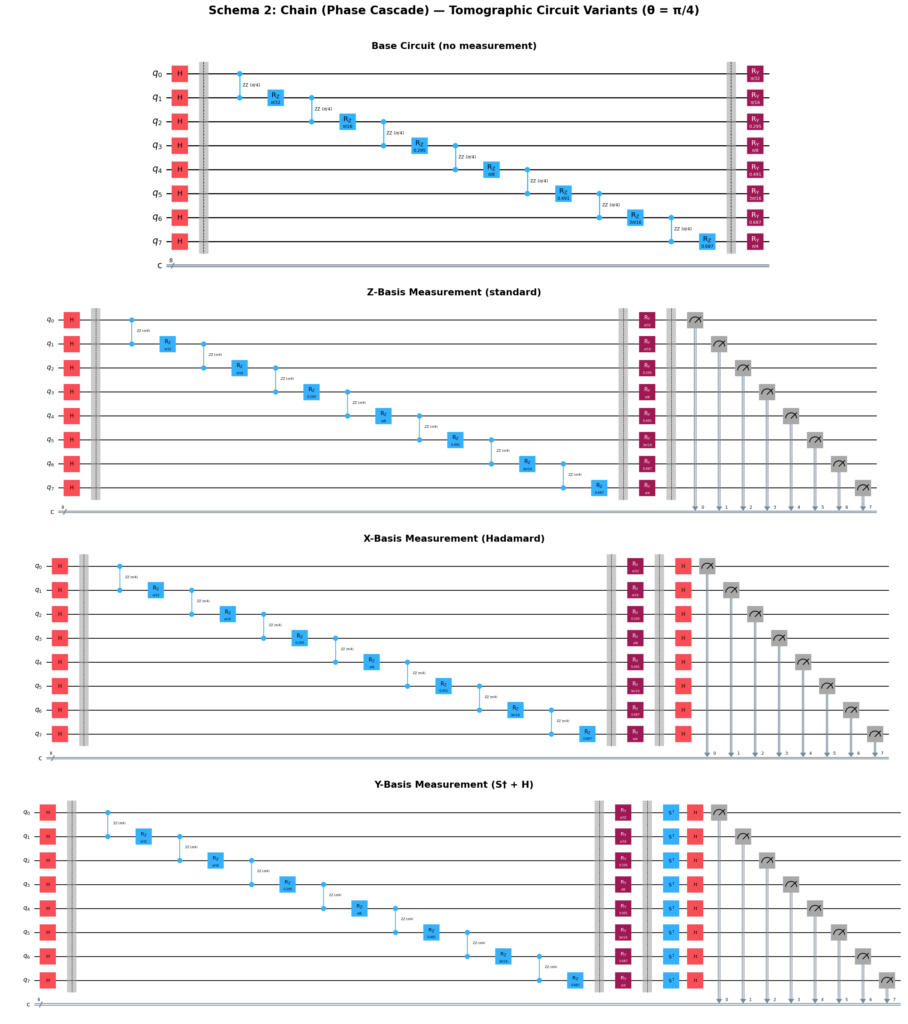

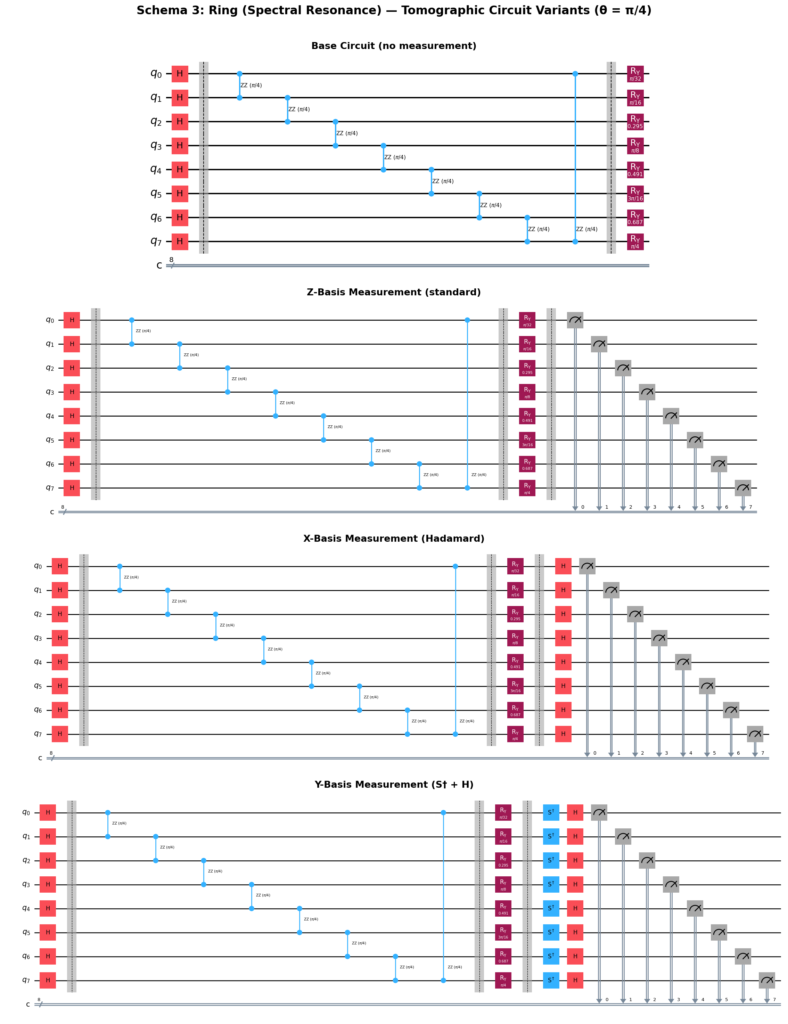

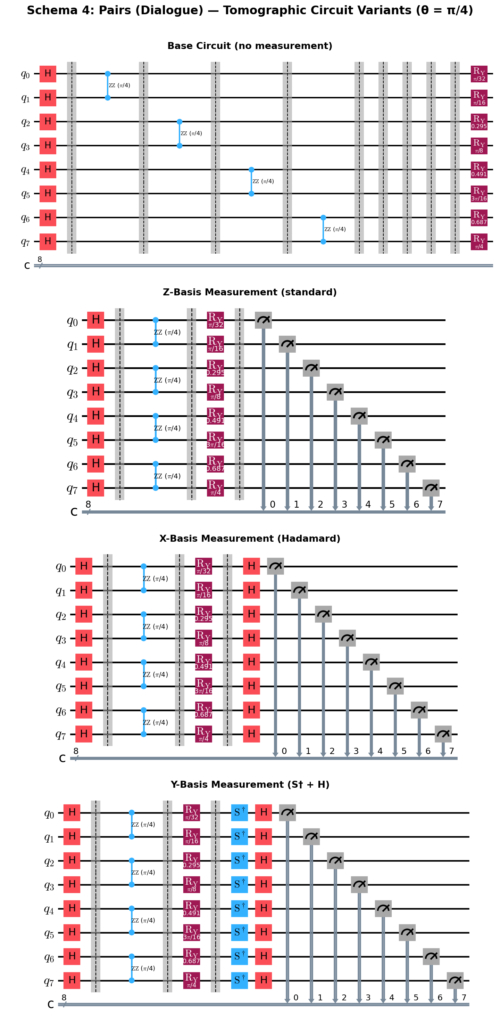

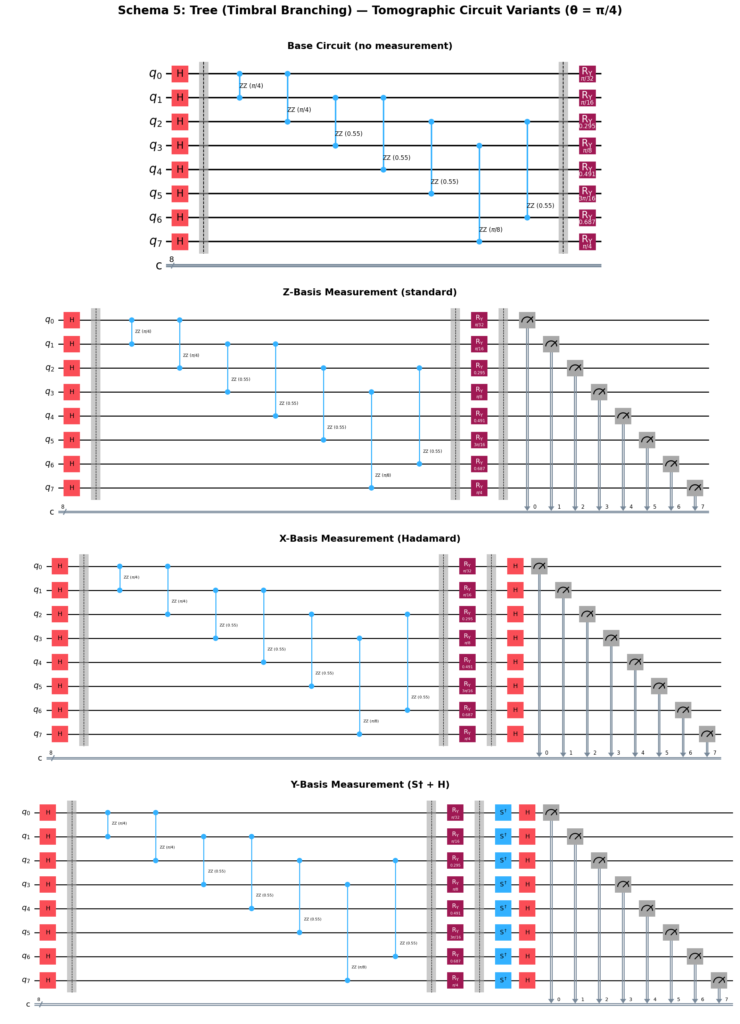

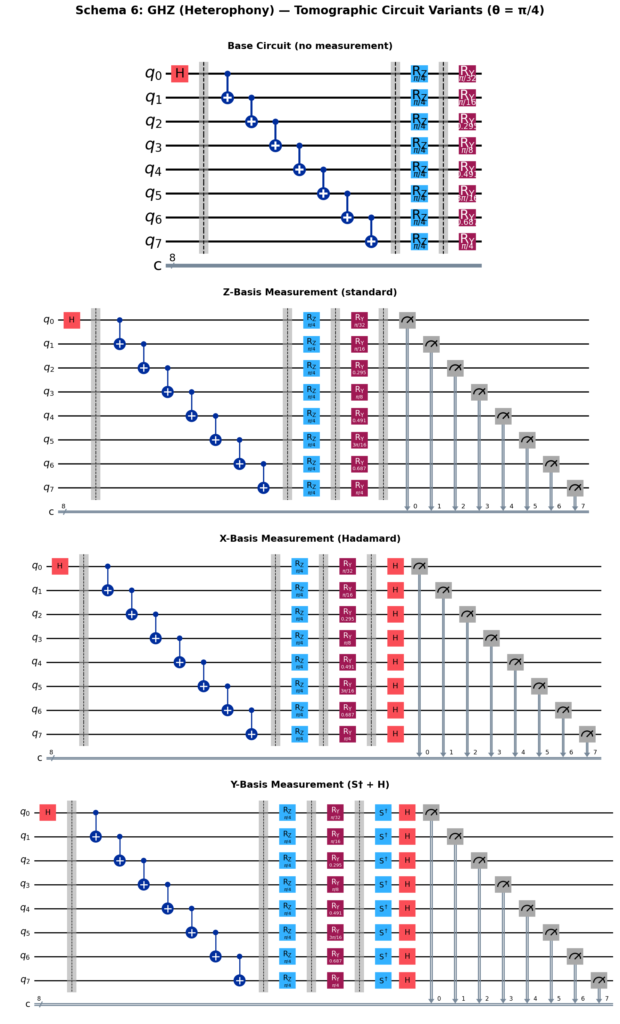

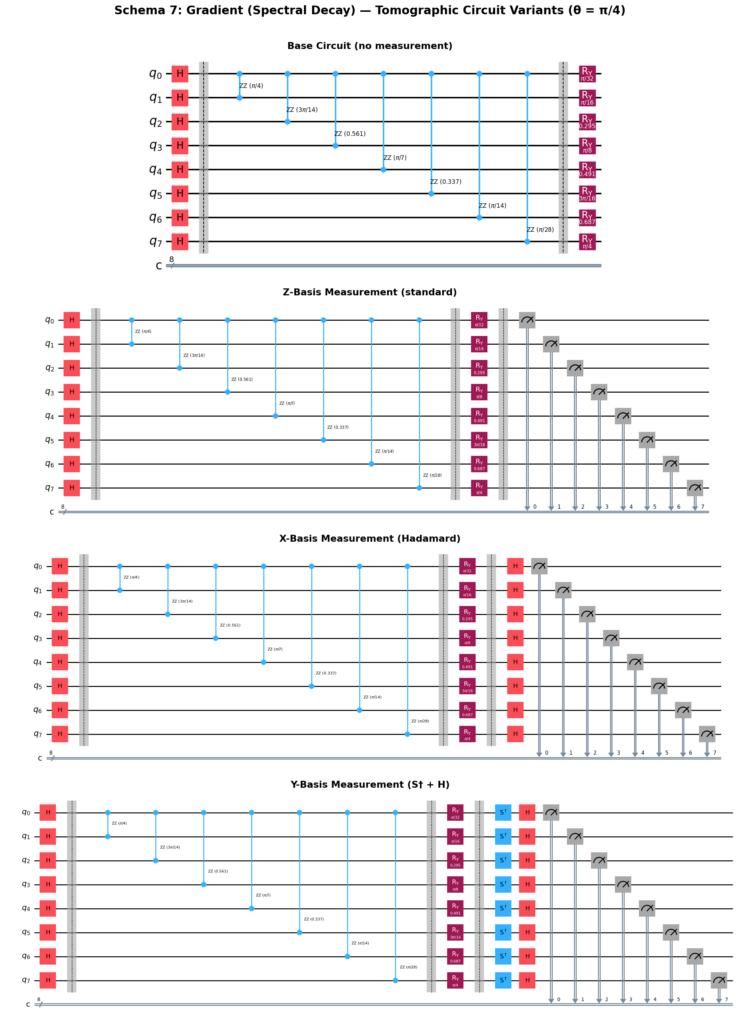

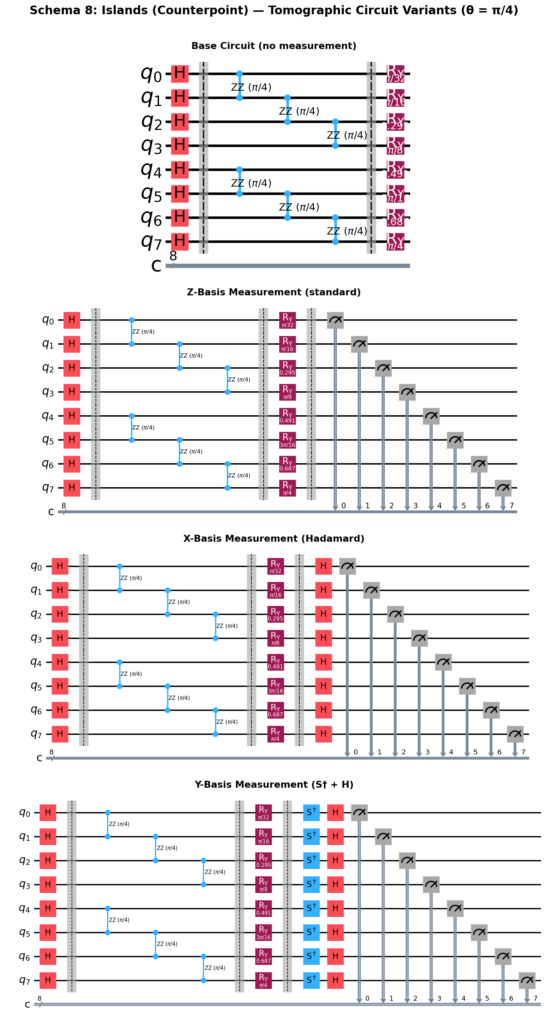

Full quantum state tomography was performed across all eight entanglement schemas from Phase II, executed on Quantum Inspire’s Tuna-9 processor. For each schema, 600 parameter steps were measured across three complementary bases:

- Z-basis: Standard computational basis measurement — reveals the north/south position on the Bloch sphere

- X-basis: Hadamard gate applied before measurement — reveals the east/west position

- Y-basis: S† gate followed by Hadamard applied before measurement — reveals the front/back position
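The reason these pre-rotations work can be checked numerically. After H, a Z-basis readout of outcome 0 corresponds to the +1 eigenstate of X; after S† and then H, it corresponds to the +1 eigenstate of Y. The sketch below is an idealized, noise-free, single-qubit simulation that assumes a normalized input state. The helper names are illustrative.

```python
import cmath
import math

R = 1 / math.sqrt(2)
H = ((R, R), (R, -R))       # Hadamard
S_DAG = ((1, 0), (0, -1j))  # S-dagger = diag(1, -i)


def apply(gate, state):
    """Multiply a 2x2 gate (tuple of rows) into a 2-component state vector."""
    a, b = state
    return (gate[0][0] * a + gate[0][1] * b, gate[1][0] * a + gate[1][1] * b)


def rotate_for_basis(state, basis):
    """Pre-measurement rotation so that a Z readout measures the chosen Pauli."""
    if basis == "Z":
        return state
    if basis == "X":
        return apply(H, state)
    if basis == "Y":
        return apply(H, apply(S_DAG, state))  # S-dagger first, then Hadamard
    raise ValueError(f"unknown basis {basis!r}")


def expectation_in_basis(state, basis):
    """Ideal (infinite-shot) expectation: <P> = P(0) - P(1) = 2 P(0) - 1."""
    rotated = rotate_for_basis(state, basis)
    return 2 * abs(rotated[0]) ** 2 - 1


if __name__ == "__main__":
    theta, phi = 1.0, 0.7
    psi = (math.cos(theta / 2), cmath.exp(1j * phi) * math.sin(theta / 2))
    for basis in "XYZ":
        print(basis, expectation_in_basis(psi, basis))
    # Analytic Bloch coordinates of the same state, for comparison:
    print(math.sin(theta) * math.cos(phi), math.sin(theta) * math.sin(phi), math.cos(theta))
```

The three printed expectations match the analytic X, Y, and Z coordinates, which is why the three rotated measurement runs can be combined into one Bloch vector per qubit.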

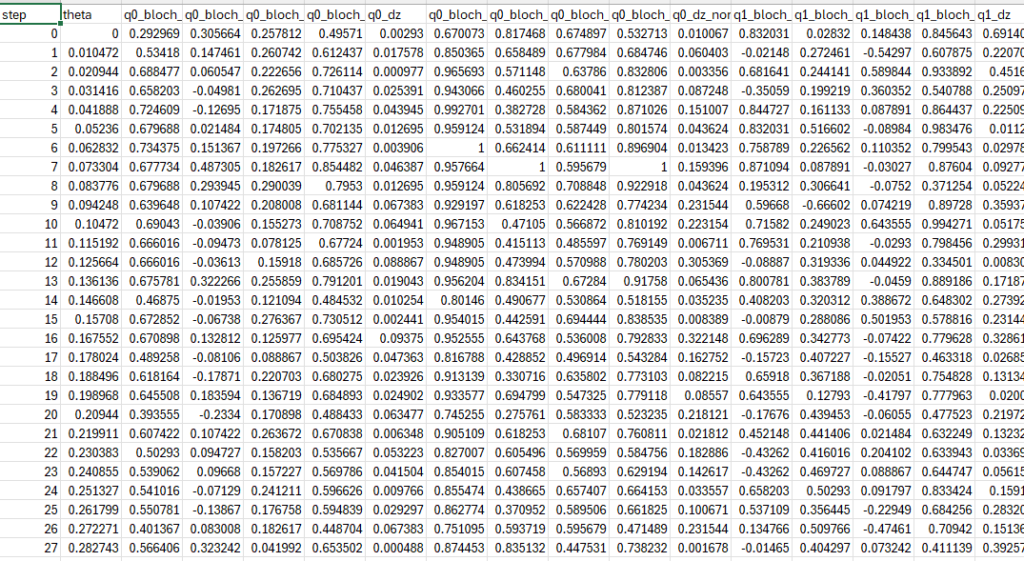

Each measurement basis required a complete set of 600 circuits executed at 2048 shots per circuit, yielding three full datasets per schema. Combined, the three basis measurements provide the complete Bloch sphere coordinates (X, Y, Z) of each of the eight qubits’ reduced single-qubit states at every parameter step: 600 points tracing each qubit’s trajectory through the full parameter evolution.
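The measurement budget implied by these figures can be tallied directly. The sketch below assumes, as stated above, that every schema used the same 600-step, three-basis, 2048-shot design. It also adds the standard binomial estimate of shot noise on each expectation value; hardware readout error is not included.

```python
import math

STEPS_PER_SCHEMA = 600
BASES = 3  # Z, X, Y
SHOTS = 2048
SCHEMAS = 8

circuits_per_schema = STEPS_PER_SCHEMA * BASES  # one circuit per step per basis
shots_per_schema = circuits_per_schema * SHOTS
total_circuits = circuits_per_schema * SCHEMAS
total_shots = shots_per_schema * SCHEMAS


def std_error(expectation, shots=SHOTS):
    """Binomial standard error of a +/-1-valued expectation estimated from `shots` samples."""
    return math.sqrt((1 - expectation ** 2) / shots)


if __name__ == "__main__":
    print(circuits_per_schema, shots_per_schema)  # 1800 3686400
    print(total_circuits, total_shots)            # 14400 29491200
    print(round(std_error(0.0), 4))               # worst case, about 0.0221
```

At 2048 shots, each Bloch coordinate therefore carries a statistical uncertainty of at most about ±0.022, before any hardware noise.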

Data Processing Pipeline

Raw measurement counts from the Tuna-9 hardware are processed into expectation values for each qubit at each parameter step. From these expectation values, three derived quantities are calculated:

- Bloch X, Y, Z coordinates: The three-dimensional position of each qubit’s state on the Bloch sphere, each coordinate an expectation value in the range [−1, 1]

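- Bloch radius (purity): The distance from the center of the sphere, calculated as √(X² + Y² + Z²). A radius of 1.0 indicates a pure quantum state; values less than 1.0 indicate mixed states resulting from decoherence and entanglement. Strictly, the radius r is a proxy for purity (the state purity Tr ρ² equals (1 + r²)/2), and finite-shot sampling noise can push measured values slightly above 1.0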

- Rate of change (dZ): The step-to-step change in the Z coordinate, used to drive vibrational and dynamic parameters in the animation
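A minimal sketch of these derived quantities follows. It uses the standard estimator ⟨P⟩ = (n₀ − n₁)/N for each qubit in whichever basis was measured. Two details are assumptions: the bitstring ordering (qubit 0 as the rightmost character, as in Qiskit's counts) and defining the first dZ value as zero. Tuna-9's actual result format may differ.

```python
import math


def expectation(counts, qubit):
    """Per-qubit <P> in the measured basis from a {bitstring: count} dict.

    Assumes little-endian keys (qubit 0 is the rightmost character); adjust
    the indexing if the backend orders bits differently.
    """
    total = sum(counts.values())
    signed = 0
    for bits, n in counts.items():
        signed += n if bits[-1 - qubit] == "0" else -n  # outcome 0 -> +1, 1 -> -1
    return signed / total


def bloch_radius(x, y, z):
    """Length of the Bloch vector: 1.0 for a pure state, below 1.0 for a mixed one."""
    return math.sqrt(x * x + y * y + z * z)


def rate_of_change(z_series):
    """Step-to-step change dZ[k] = Z[k] - Z[k-1], with dZ[0] defined as 0.0."""
    return [0.0] + [b - a for a, b in zip(z_series, z_series[1:])]


if __name__ == "__main__":
    # Hypothetical Z-basis counts for a 2-qubit register, 2048 shots.
    z_counts = {"00": 548, "01": 1500}
    print(expectation(z_counts, 0), expectation(z_counts, 1))
    print(bloch_radius(0.6, 0.0, 0.8))
    print(rate_of_change([0.0, 0.5, 0.25]))
```

In a full pipeline, X, Y, and Z for a given qubit and step would come from the X-, Y-, and Z-basis counts of that step respectively.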

These processed coordinates are exported as CSV files — one per schema — serving as the direct input to the Blender animation pipeline.
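One plausible layout for these files is a long table with one row per parameter step and qubit. The column names and the file name mentioned in the comment are hypothetical. The sketch only shows that Python's standard csv module writes such rows and reads them back without loss. The row values are made up for illustration.

```python
import csv
import io

# Hypothetical long layout: one row per (parameter step, qubit).
FIELDS = ["step", "qubit", "x", "y", "z", "radius", "dz"]
INT_FIELDS = {"step", "qubit"}


def write_schema_csv(rows, fh):
    """Write processed tomography rows (dicts keyed by FIELDS) to an open text file."""
    writer = csv.DictWriter(fh, fieldnames=FIELDS)
    writer.writeheader()
    writer.writerows(rows)


def read_schema_csv(fh):
    """Read the rows back with numeric types restored."""
    return [
        {k: int(v) if k in INT_FIELDS else float(v) for k, v in row.items()}
        for row in csv.DictReader(fh)
    ]


if __name__ == "__main__":
    rows = [  # made-up values for illustration
        {"step": 0, "qubit": 0, "x": 0.12, "y": -0.05, "z": 0.97, "radius": 0.9787, "dz": 0.0},
        {"step": 1, "qubit": 0, "x": 0.20, "y": -0.04, "z": 0.93, "radius": 0.9521, "dz": -0.04},
    ]
    buf = io.StringIO()  # stand-in for open("schema01_star.csv", "w", newline="")
    write_schema_csv(rows, buf)
    buf.seek(0)
    print(read_schema_csv(buf) == rows)
```

When writing to a real file, opening it with `newline=""` is what the csv documentation recommends.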

Circuit Schemas — Eight Entanglement Topologies

The eight schemas from Phase II each produce a distinct tomographic dataset, reflecting their unique entanglement architectures. Each schema’s visual character in the animation is a direct expression of its quantum physical structure:

Schema 01 — Star

A central hub qubit entangled with all seven peripheral qubits simultaneously. Produces radially symmetric trajectory patterns, with the hub qubit, which is entangled with every other qubit, showing the greatest loss of purity.

Schema 02 — Chain

Sequential entanglement cascading from qubit 0 through qubit 7 with accumulating phase shifts. Produces wave-like trajectory propagation across the qubit array, with later qubits showing increasingly complex phase relationships.

Schema 03 — Ring

Circular entanglement topology with the final qubit connected back to the first. Produces rotationally symmetric trajectories with periodic interference patterns arising from the closed loop structure.

Schema 04 — Pairs

Independent entangled pairs (0-1, 2-3, 4-5, 6-7) with no inter-pair coupling. Produces four distinct paired trajectory families, each evolving independently — creating visual counterpoint between the pairs.

Schema 05 — Tree

Hierarchical binary tree entanglement structure. Produces trajectories organized by depth level, with qubits at different levels of the hierarchy exhibiting characteristically different trajectory geometries.

Schema 06 — GHZ

Greenberger-Horne-Zeilinger maximally entangled state — all eight qubits in a single maximally entangled superposition. Produces the most dramatically correlated trajectories of all schemas, with collective quantum behavior dominating individual qubit dynamics.

Schema 07 — Gradient

Progressive entanglement strength increasing across the qubit array. Produces a smooth gradient of trajectory complexity from the weakly entangled end to the strongly entangled end of the chain.

Schema 08 — Islands

Two isolated entangled clusters with no inter-cluster coupling. Produces two visually distinct trajectory families coexisting within the same animation, creating a natural visual dialogue between independent quantum subsystems.
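The eight topologies can be summarized as lists of entangling qubit pairs. The sketch below is hypothetical, because several details are not given here: the exact Phase II gate sequences, the rotation angles, the cluster sizes for Islands (assumed to be two groups of four), and the mapping onto Tuna-9's native connectivity. Only the connectivity pattern is modeled. A small union-find check then confirms the cluster structure described above: four independent clusters for Pairs and two for Islands.

```python
def schema_edges(name, n=8):
    """Hypothetical entangling pairs (control, target) for each named topology.

    Illustrative only: gate sequences, rotation angles, and transpilation onto
    the hardware's native connectivity are not modeled.
    """
    chain = [(k, k + 1) for k in range(n - 1)]
    if name in ("chain", "ghz", "gradient"):
        # GHZ: Hadamard on qubit 0, then this CNOT chain.
        # Gradient: same pairs, with coupling strength rising along the chain.
        return chain
    if name == "star":
        return [(0, k) for k in range(1, n)]
    if name == "ring":
        return chain + [(n - 1, 0)]
    if name == "pairs":
        return [(k, k + 1) for k in range(0, n, 2)]
    if name == "tree":
        return [((c - 1) // 2, c) for c in range(1, n)]  # binary-heap parent of c
    if name == "islands":
        half = n // 2
        return [(k, k + 1) for k in range(half - 1)] + [(k, k + 1) for k in range(half, n - 1)]
    raise ValueError(f"unknown schema {name!r}")


def components(edges, n=8):
    """Group qubits into connected clusters (union-find)."""
    parent = list(range(n))

    def find(q):
        while parent[q] != q:
            parent[q] = parent[parent[q]]  # path halving
            q = parent[q]
        return q

    for a, b in edges:
        parent[find(a)] = find(b)
    groups = {}
    for q in range(n):
        groups.setdefault(find(q), []).append(q)
    return sorted(groups.values())


if __name__ == "__main__":
    for name in ("star", "chain", "ring", "pairs", "tree", "ghz", "gradient", "islands"):
        print(name, len(components(schema_edges(name))), schema_edges(name))
```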

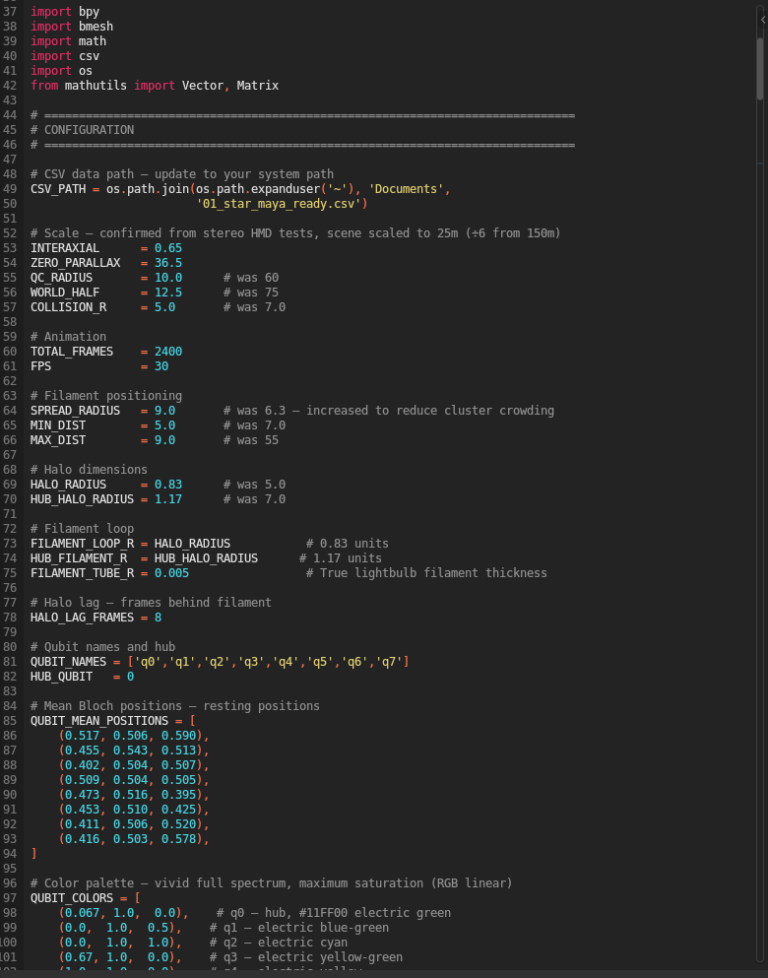

The Blender VR Animation Pipeline

Coordinate Mapping

The processed Bloch sphere coordinates are mapped to three-dimensional animation parameters within Blender. Each of the eight qubits is represented as a quantum filament — a luminous, volumetric strand whose spatial position, orientation, and dynamic behavior are governed entirely by the tomographic data:

- Spatial position: The X, Y, Z Bloch coordinates drive the three-dimensional position of each filament’s control points across the 600 parameter steps of the animation sequence

- Bloch radius (purity): Maps to filament luminosity and thickness — high purity states produce bright, well-defined filaments while mixed states produce dimmer, more diffuse forms

- Rate of change (dZ): Maps to vibrational frequency and amplitude — rapid state evolution produces agitated, energetic filament behavior while slow evolution produces smooth, flowing motion
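The three mappings can be expressed as one function from a tomography sample to a set of filament controls. Every numeric range here is an illustrative assumption and not a setting of the Phase III scene: emission, thickness, vibration frequency, and the dZ reference value. Applying the results through Blender's Python API is not shown.

```python
import math


def filament_params(x, y, z, dz, center=(0.0, 0.0, 0.0), scale=1.0,
                    max_emission=10.0, max_vib_hz=8.0, dz_ref=0.1):
    """Map one tomography sample to hypothetical filament controls.

    All ranges (scale, emission, thickness, vibration) are illustrative
    assumptions, not the values used in the Phase III scene.
    """
    radius = min(math.sqrt(x * x + y * y + z * z), 1.0)  # clamp shot-noise overshoot
    agitation = min(abs(dz) / dz_ref, 1.0)               # 0 = calm, 1 = fully agitated
    cx, cy, cz = center
    return {
        "position": (cx + scale * x, cy + scale * y, cz + scale * z),
        "emission": max_emission * radius,       # purer state -> brighter
        "thickness": 0.02 + 0.08 * radius,       # purer state -> thicker, crisper
        "vibration_hz": max_vib_hz * agitation,  # faster change -> faster vibration
        "vibration_amp": 0.05 * agitation,       # ... and larger amplitude
    }


if __name__ == "__main__":
    print(filament_params(0.0, 0.0, 1.0, 0.0))    # pure, static state
    print(filament_params(0.1, 0.2, 0.1, -0.15))  # mixed, rapidly changing state
```

Clamping the radius at 1.0 keeps small shot-noise overshoots from producing brightness values above the intended maximum.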

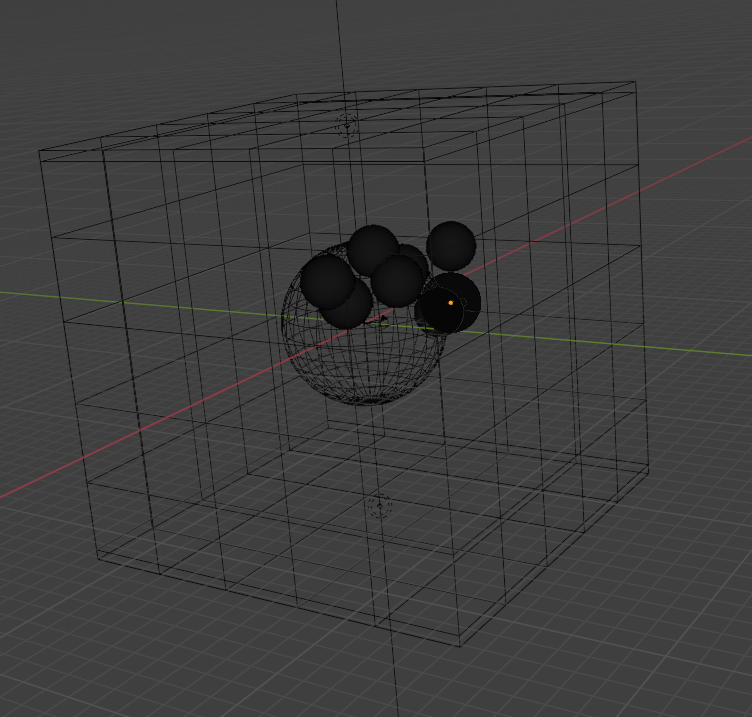

Scene Architecture

The animation environment is a chrome reflective VR cuboid — an infinite mirrored interior that multiplies and propagates the quantum filaments across the visual field, creating an immersive environment that suggests the infinite-dimensional nature of Hilbert space itself. Eight quantum filaments occupy the interior, their trajectories animated across 2400 frames at 30 frames per second, producing an 80-second stereoscopic VR animation.
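At 30 frames per second, 2400 frames give 80 seconds, and 2400 / 600 = 4, so each parameter step occupies four frames if the steps are spread evenly. The sketch below assumes exactly that even spacing, with linear interpolation between steps. Blender's default keyframe interpolation is Bezier, so the actual F-curves would be smoother. The sketch also computes the playback time of an excerpt at reduced speed.

```python
FPS = 30
TOTAL_FRAMES = 2400
STEPS = 600
FIRST_FRAME = 1  # Blender's default scene start frame

FRAMES_PER_STEP = TOTAL_FRAMES // STEPS  # 4 frames between keyed steps
DURATION_S = TOTAL_FRAMES / FPS          # 80.0 seconds


def key_frame(step):
    """Frame at which 0-based parameter step `step` is keyed."""
    return FIRST_FRAME + step * FRAMES_PER_STEP


def value_at_frame(values, frame):
    """Linearly interpolate per-step values at `frame`, holding the last value."""
    t = max(0.0, (frame - FIRST_FRAME) / FRAMES_PER_STEP)
    k = int(t)
    if k >= len(values) - 1:
        return values[-1]
    frac = t - k
    return values[k] * (1 - frac) + values[k + 1] * frac


def playback_seconds(frames, speed=1.0):
    """Wall-clock time to play `frames` at FPS, slowed or sped up by `speed`."""
    return frames / FPS / speed


if __name__ == "__main__":
    print(FRAMES_PER_STEP, DURATION_S, key_frame(STEPS - 1))
    print(value_at_frame([0.0, 1.0], 3))
    print(playback_seconds(689, speed=0.25))
```

As an example, `playback_seconds(689, speed=0.25)` covers the 689-frame Star excerpt described in the Results section and comes to about 92 seconds.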

The chrome environment was chosen deliberately — its reflective properties mean that the quantum filaments are never seen in isolation but always in relation to their own reflections and to each other, creating a visual analogy for quantum entanglement: each element simultaneously independent and inseparable from the whole.

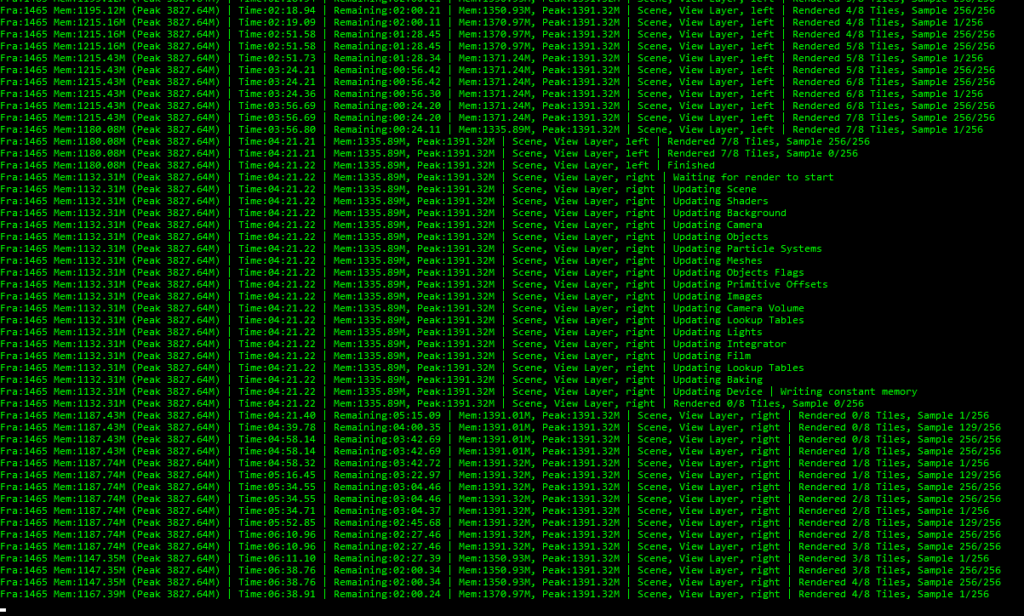

Rendering

The animation is rendered across a high-performance GPU cluster equipped with multiple NVIDIA RTX 4090 graphics cards, producing stereoscopic 3D frames at full resolution. The render pipeline was optimized for the specific requirements of the quantum filament geometry, with subdivision surface modifiers and volumetric glass materials producing the characteristic luminous quality of the final output.
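A common way to spread a frame sequence across several GPUs is to give each card a contiguous frame range. The sketch below builds Blender command lines using its standard background-render flags (`-b`, `-o`, `-s`, `-e`, `-a`) and pins each job to one card with `CUDA_VISIBLE_DEVICES`. The .blend file name, output pattern, and GPU count are hypothetical. Stereoscopic output is assumed to come from the scene's own stereoscopy (multiview) settings, which the command line leaves untouched.

```python
import shlex


def frame_chunks(first, last, workers):
    """Split the inclusive range [first, last] into `workers` contiguous, near-equal chunks."""
    base, extra = divmod(last - first + 1, workers)
    chunks, start = [], first
    for w in range(workers):
        size = base + (1 if w < extra else 0)
        chunks.append((start, start + size - 1))
        start += size
    return chunks


def render_commands(blend_file, output_pattern, first, last, gpus):
    """One background Blender job per GPU; CUDA_VISIBLE_DEVICES pins each job to a card."""
    jobs = []
    for gpu, (s, e) in enumerate(frame_chunks(first, last, gpus)):
        env = {"CUDA_VISIBLE_DEVICES": str(gpu)}
        # Blender parses arguments in order, so -o/-s/-e must precede -a.
        argv = ["blender", "-b", blend_file, "-o", output_pattern,
                "-s", str(s), "-e", str(e), "-a"]
        jobs.append((env, argv))
    return jobs


if __name__ == "__main__":
    # Hypothetical file name and GPU count; "//" means relative to the .blend file.
    for env, argv in render_commands("quantum_filaments.blend", "//frames/star_####", 1, 2400, 4):
        print(f"CUDA_VISIBLE_DEVICES={env['CUDA_VISIBLE_DEVICES']}", shlex.join(argv))
```

Each `(env, argv)` pair could be launched with `subprocess.Popen(argv, env={**os.environ, **env})`. The sketch only prints the commands.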

Results — The Organic Coherence Discovery

The most significant and unexpected finding of Phase III emerged during review of the initial render output. When the first 689 frames of the Schema 01 Star topology animation were played back at quarter speed alongside the Phase II compositions, a striking organic coherence was observed with “The Infinite and the Infinitesimal” — the final and most fully realized composition of Phase II.

This coherence was not designed. No explicit synchronization was attempted between the visual and audio material. Both derive independently from the same quantum hardware execution — the audio from direct waveform transduction in Phase II, the visual from tomographic trajectory extraction in Phase III. Yet when experienced together, the slowly evolving four-movement structure of the composition and the fluid, continuous motion of the quantum filaments appear to inhabit the same temporal and expressive world.

This finding carries significant implications for the research program as a whole. It suggests that authentic quantum hardware execution produces a kind of perceptual unity across sensory domains, a coherence that emerges naturally from shared physical origin rather than from deliberate compositional synchronization. Classical simulation, which produces idealized, noise-free results, could not generate this quality. It is a property of real quantum hardware execution specifically, and it validates the core premise of the Quantum Computational Creativity research program.

The Phase III visual work achieves something that pure audio cannot: a direct, unmediated view of quantum entanglement in action. The work renders eight animated filaments, each tracing one qubit’s trajectory within the quantum processor. The qubits are constrained; what appears as confinement is real confinement, within the quantum processor itself. And because the qubits are entangled, the eight filaments are not eight independent paths. They are eight expressions of a single unified quantum system, their movements structured by the correlations built into the entanglement topology. The entanglement relationships become visible as the qubits come together and move apart. The visualization does not interpret the physics through metaphor or artistic mediation. It is the physics, rendered directly into perceptual space.

Hardware Signature — Tuna-9 vs ibm_fez

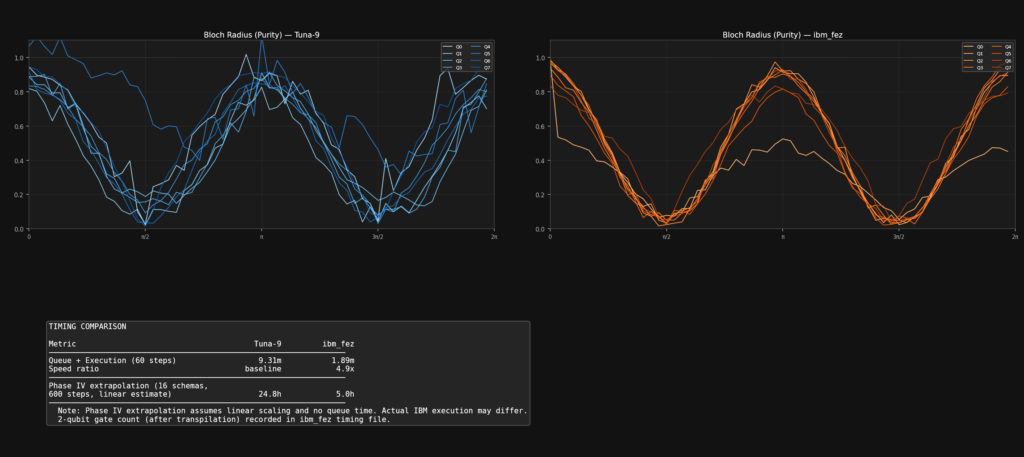

The Quantum Inspire Tuna-9 processor was instrumental in establishing and validating the core methodology of this research program, providing the hardware access that made Phases II and III possible. As the research evolves toward the dramatically expanded scale of Phase IV — sixteen schemas, nine qubits each, engaging 144 qubits simultaneously — IBM’s hardware architecture is the natural and necessary next step. To establish accurate compute time estimates for the Phase IV work and in support of an IBM Quantum Credits Program grant application, a direct benchmark comparison between Tuna-9 and IBM’s ibm_fez backend was conducted using identical 60-step Chain schema tomographic circuits.

The comparison revealed that ibm_fez completed execution in 1.94 minutes versus 9.31 minutes on Tuna-9 — a 4.8× speed advantage — while also producing significantly lower noise and higher qubit purity values. The smoother, more coherent trajectories of ibm_fez reflect its superior hardware fidelity, while Tuna-9’s characteristic noise signature gives the Phase II and III material its own distinctive and authentic quantum character.
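The benchmark figures support a quick check and a rough extrapolation. The speed ratio follows directly from the two measured times. The Phase IV projection is a naive linear scaling, and its inputs are hypothetical: 600 steps per schema, a 60-step benchmark time that covers all three bases, and no change in per-step cost from queue time, per-job overhead, or the move from eight to nine qubits.

```python
TUNA9_MIN = 9.31    # measured: 60-step Chain tomography on Tuna-9
IBM_FEZ_MIN = 1.94  # measured: same circuits on ibm_fez
BENCH_STEPS = 60


def speedup(slow=TUNA9_MIN, fast=IBM_FEZ_MIN):
    """Ratio of execution times for identical circuits."""
    return slow / fast


def extrapolate_minutes(bench_minutes, steps_per_schema, schemas, bench_steps=BENCH_STEPS):
    """Naive linear scaling of benchmark time by step count and schema count.

    Ignores queue time, per-job overheads, and any change in shots or qubit count.
    """
    return bench_minutes * (steps_per_schema / bench_steps) * schemas


if __name__ == "__main__":
    print(round(speedup(), 2))
    # Hypothetical Phase IV plan: 16 schemas, assuming 600 steps each as in Phase III.
    for label, minutes in (("ibm_fez", IBM_FEZ_MIN), ("Tuna-9", TUNA9_MIN)):
        est = extrapolate_minutes(minutes, 600, 16)
        print(label, round(est, 1), "min", round(est / 60, 1), "h")
```

Under those assumptions the projection is about 310 minutes (roughly 5.2 hours) on ibm_fez, compared with about 1,490 minutes (roughly 25 hours) on Tuna-9.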

Both hardware signatures are valid artistic voices. Phase IV simply requires the scale and fidelity that IBM’s architecture makes possible.

Validation

Phase III validates several key propositions of the Quantum Computational Creativity research program:

Quantum tomography as artistic medium: Full quantum state tomography, conventionally a scientific measurement technique, functions equally as a generative artistic process when its outputs are transduced into visual parameters. The scientific and creative applications are not merely compatible — they are identical.

Hardware authenticity as artistic value: The noise, decoherence, and hardware-specific signatures present in the tomographic data are not imperfections to be corrected but essential components of the visual character. Different hardware produces different art from identical circuits.

Cross-modal quantum coherence: Audio and visual material derived independently from the same quantum hardware execution exhibit organic coherence across sensory domains — a coherence unavailable through classical simulation and attributable specifically to shared quantum physical origin.

Scalability: The tomographic pipeline executed reliably across all eight schemas, producing complete 600-step datasets for each, demonstrating that the methodology is robust and scalable to the expanded circuit architectures planned for Phase IV.

Discussion and Future Directions

Phase III establishes time-based spatial visualization as a natural and essential component of the Quantum Computational Creativity methodology. The organic coherence discovery in particular opens new compositional possibilities — if audio and visual material derived from the same quantum execution naturally cohere, then a unified quantum audiovisual work becomes not merely possible but perhaps inevitable.

Phase IV will expand this visual methodology alongside the audio work, applying the tomographic pipeline to 16 circuit schemas across 9 qubits each on IBM’s hardware. The expanded qubit count, novel circuit topologies, and IBM’s superior hardware fidelity will produce visual material of substantially greater complexity and variety. The non-uniform parameter sampling strategies planned for Phase IV will give each schema’s visual trajectory an internal rhythmic life — phrases, accelerations, and clumping — that the evenly spaced Phase III trajectories could not produce.
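Non-uniform sampling can be illustrated with a simple monotone warp of an evenly spaced grid. This is one hypothetical strategy, not the scheme planned for Phase IV, and the parameter range [0, 2π] is an assumption. Each sample still maps to the same number of animation frames. As a result, regions where samples bunch together play back slowly, and regions where they spread apart play back quickly.

```python
import math


def uniform_grid(n, lo=0.0, hi=2 * math.pi):
    """Evenly spaced parameter values, as in the Phase III sweeps."""
    return [lo + (hi - lo) * k / (n - 1) for k in range(n)]


def warped_grid(n, lo=0.0, hi=2 * math.pi, depth=0.6, cycles=3):
    """Monotone, non-uniform grid: u -> u + depth*sin(2*pi*cycles*u)/(2*pi*cycles).

    The warp's slope is 1 + depth*cos(2*pi*cycles*u) >= 1 - depth, so for
    0 <= depth < 1 the samples stay strictly ordered while their spacing
    periodically tightens (clumping) and widens (acceleration). An integer
    number of cycles keeps both endpoints fixed.
    """
    omega = 2 * math.pi * cycles
    grid = []
    for k in range(n):
        u = k / (n - 1)
        grid.append(lo + (hi - lo) * (u + depth * math.sin(omega * u) / omega))
    return grid


if __name__ == "__main__":
    g = warped_grid(600)
    gaps = [b - a for a, b in zip(g, g[1:])]
    print(min(gaps), max(gaps), max(gaps) / min(gaps))
```

The `depth` parameter controls how strongly the sampling clumps, and `cycles` sets how many such phrases occur across the sweep.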

The longer term vision is a fully integrated quantum audiovisual performance work: a single quantum hardware execution generating both the compositional material and the visual environment simultaneously, unified by their shared physical origin in Hilbert space.

Conclusion

Phase III demonstrates that quantum state tomography, executed on real quantum hardware, generates not only scientifically meaningful data but visually compelling artistic material whose character is inseparable from the physics of its origin. The eight entanglement schemas produce eight distinct visual languages, each reflecting its unique quantum topology. The chrome VR environment situates these trajectories within an immersive spatial context that invites the viewer into Hilbert space itself.

Most significantly, the organic coherence between the Phase III visual material and the Phase II audio compositions — both derived from identical quantum hardware execution — validates the foundational premise of this research: that quantum hardware is a generative artistic instrument capable of producing unified creative experiences across sensory domains, in ways that classical computing fundamentally cannot replicate.

Quantum Computational Creativity — Phase III Documentation — March 2026